
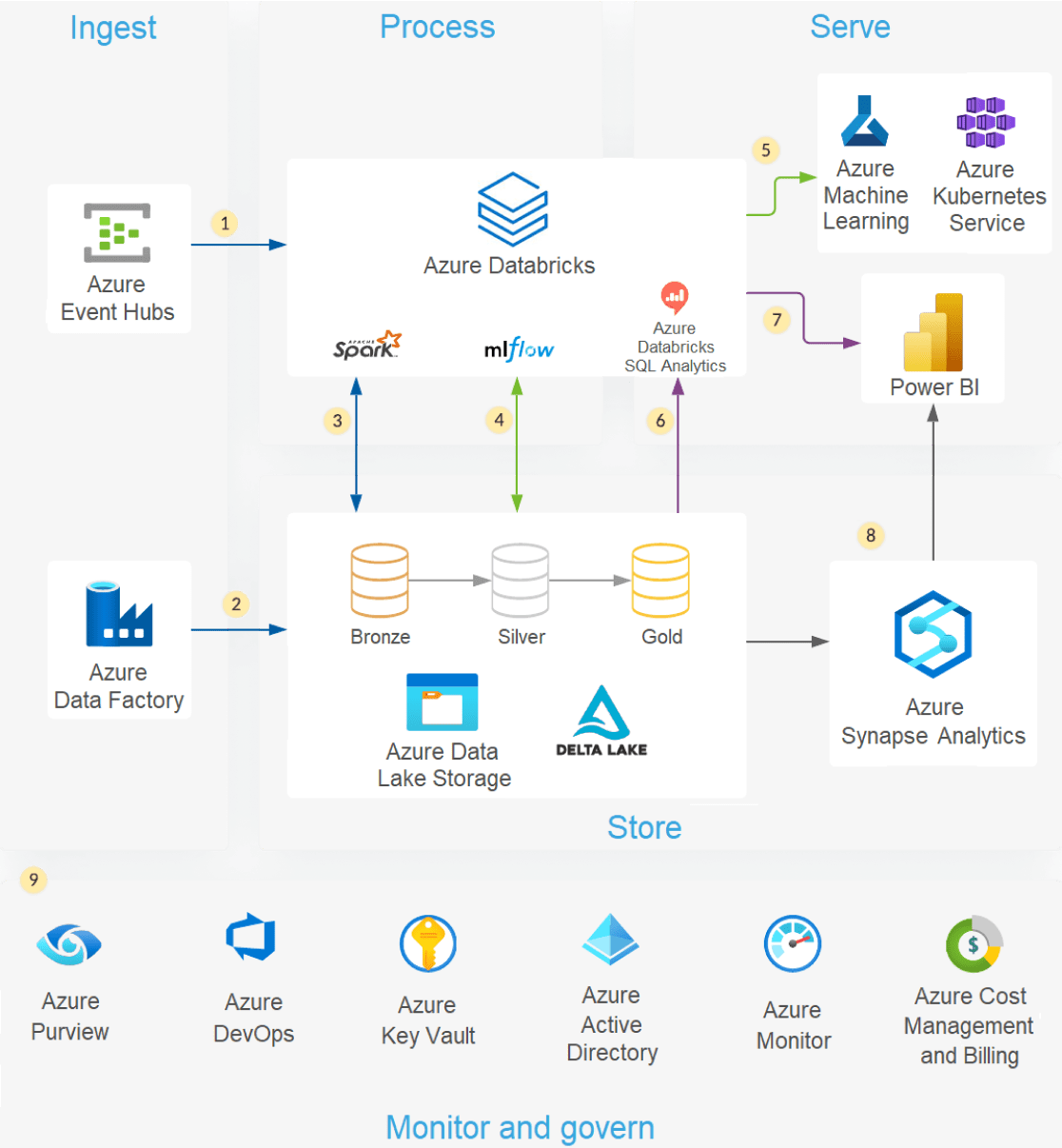

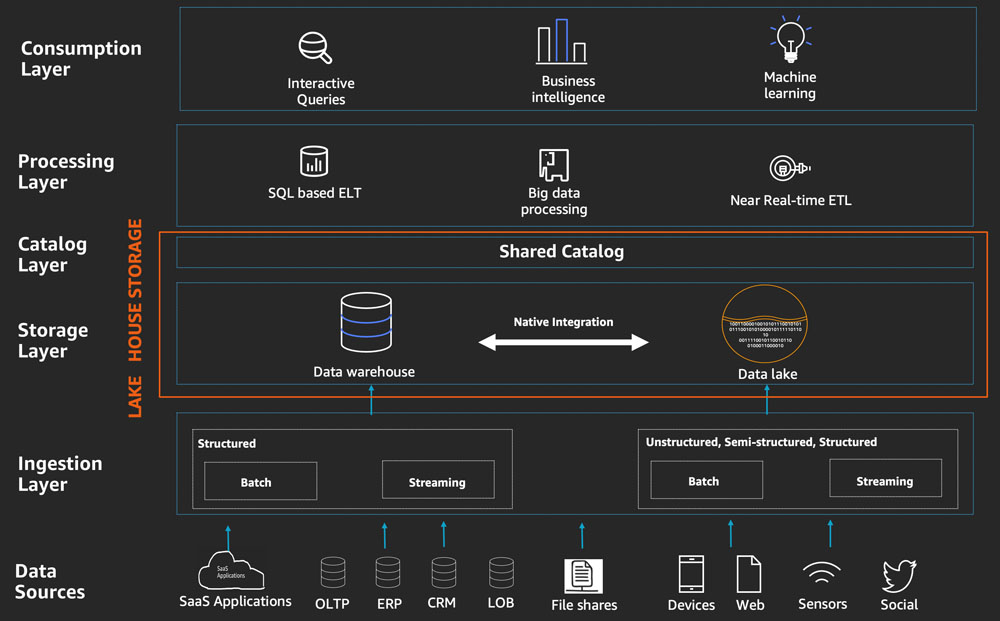

A data lakehouse provides scalable storage and processing capabilities for modern organizations that want to avoid isolated systems for processing different workloads, like machine learning (ML) and business intelligence (BI). A data lakehouse can help establish a single source of truth, eliminate redundant costs, and ensure data freshness.

Data lakehouses often use a data design pattern that incrementally improves, enriches, and refines data as it moves through layers of staging and transformation. Each layer of the lakehouse can itself include one or more layers. This pattern is frequently referred to as a medallion architecture. For more information, see What is the medallion lakehouse architecture?

How does the Databricks lakehouse work?

Databricks is built on Apache Spark. Apache Spark enables a massively scalable engine that runs on compute resources decoupled from storage. For more information, see Apache Spark on Azure Databricks.

The Databricks lakehouse uses two additional key technologies:

- Delta Lake: an optimized storage layer that supports ACID transactions and schema enforcement.
- Unity Catalog: a unified, fine-grained governance solution for data and AI.

At the ingestion layer, batch or streaming data arrives from a variety of sources and in a variety of formats. This first logical layer provides a place for that data to land in its raw format. As you convert those files to Delta tables, you can use the schema enforcement capabilities of Delta Lake to check for missing or unexpected data. You can use Unity Catalog to register tables according to your data governance model and required data isolation boundaries. Unity Catalog allows you to track the lineage of your data as it is transformed and refined, as well as apply a unified governance model to keep sensitive data private and secure.

Data processing, curation, and integration

Once verified, you can start curating and refining your data. Data scientists and machine learning practitioners frequently work with data at this stage to start combining or creating new features and complete data cleansing. Once your data has been thoroughly cleansed, it can be integrated and reorganized into tables designed to meet your particular business needs.

A schema-on-write approach, combined with Delta schema evolution capabilities, means that you can make changes to this layer without necessarily having to rewrite the downstream logic that serves data to your end users.

The final layer serves clean, enriched data to end users. The final tables should be designed to serve data for all your use cases. A unified governance model means you can track data lineage back to your single source of truth. Data layouts, optimized for different tasks, allow end users to access data for machine learning applications, data engineering, and business intelligence and reporting.
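To make the layered flow concrete, here is a minimal pure-Python sketch of raw data landing, being checked against a schema, and then being reorganized into a serving table. It is only an illustration of the pattern: it does not use the Delta Lake or Unity Catalog APIs, and the names `ORDER_SCHEMA`, `conforms`, `to_silver`, and `to_gold`, along with the sample records, are hypothetical.

```python
from collections import defaultdict

# Hypothetical schema for this illustration: column name -> expected Python type.
ORDER_SCHEMA = {"order_id": int, "region": str, "amount": float}


def conforms(record: dict, schema: dict) -> bool:
    """Return True only if the record has exactly the schema's columns and types."""
    return record.keys() == schema.keys() and all(
        isinstance(record[name], expected) for name, expected in schema.items()
    )


def to_silver(bronze: list, schema: dict) -> tuple:
    """Split raw records into validated rows and rejected rows."""
    accepted, rejected = [], []
    for record in bronze:
        (accepted if conforms(record, schema) else rejected).append(record)
    return accepted, rejected


def to_gold(silver: list) -> dict:
    """Aggregate validated rows into a serving table: total amount per region."""
    totals = defaultdict(float)
    for row in silver:
        totals[row["region"]] += row["amount"]
    return dict(totals)


# Raw landing layer: data arrives as-is, including malformed records.
bronze = [
    {"order_id": 1, "region": "EU", "amount": 20.0},
    {"order_id": 2, "region": "US", "amount": 5.5},
    {"order_id": 3, "region": "EU"},                   # missing column
    {"order_id": 4, "region": "US", "amount": "n/a"},  # unexpected type
    {"order_id": 5, "region": "EU", "amount": 4.5},
]

silver, rejected = to_silver(bronze, ORDER_SCHEMA)
gold = to_gold(silver)
print(gold)
```

In this sketch the rejected rows are kept rather than discarded, so they can be inspected later. Only the validated rows feed the aggregated table that end users would query.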
